Be bold

Inspiring engineers to elevate the world through bold and socially responsible innovation

Be boundless

Inspiring engineers to elevate the world through bold and socially responsible innovation

Be a Baskin Engineer

Inspiring engineers to elevate the world through bold and socially responsible innovation

#2

top public colleges for engineering salaries (Wall Street Journal, 2023)

#2

public university for students focused on making an impact in the world (Princeton Review, 2022)

#5

best game/simulation development program in the nation (U.S. News & World Report, 2024)

AAU

UCSC joined just 65 other universities in the Association of American Universities in 2019

#4

for social mobility, acknowledging our commitment to enrolling and graduating low-income students (U.S. News & World Report, 2020)

HSRU

member of the Alliance of Hispanic Serving Research Universities

#5

of the top 50 Institutions by Total Bachelors Degrees awarded in Computer Science in Engineering (ASEE, 2020)

Overview

A campus of exceptional beauty in coastal Santa Cruz is home to a community of people who are problem solvers by nature: Baskin Engineers.

Founded in 1997 alongside the expanding internet, our school is uniquely positioned to address the challenges of the modern world. At the Baskin School of Engineering, faculty and students collaborate to create technology with a positive impact on society, in the dynamic atmosphere of a top-tier research university.

Welcome to Baskin Engineering

An exciting academic life awaits you

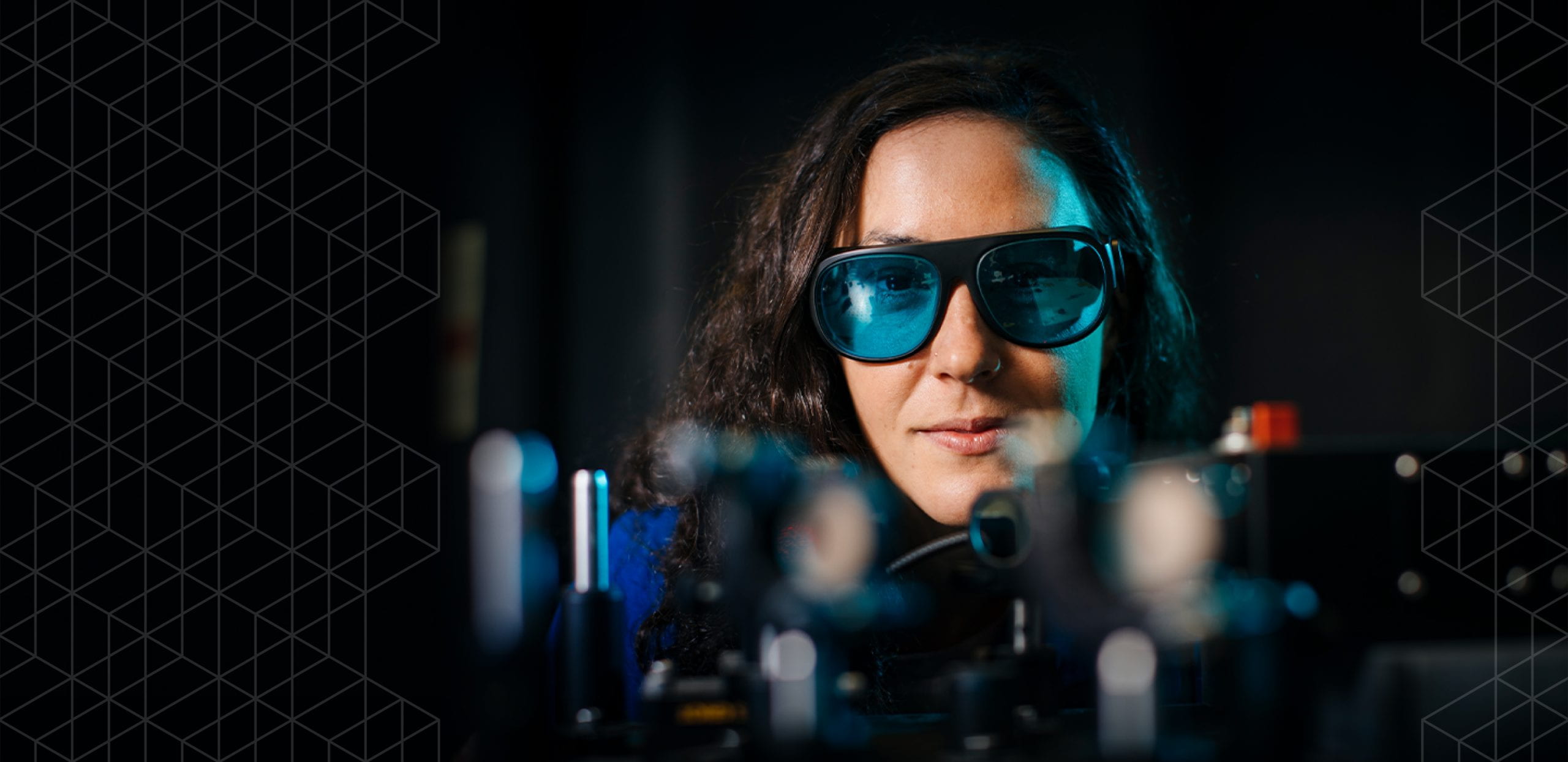

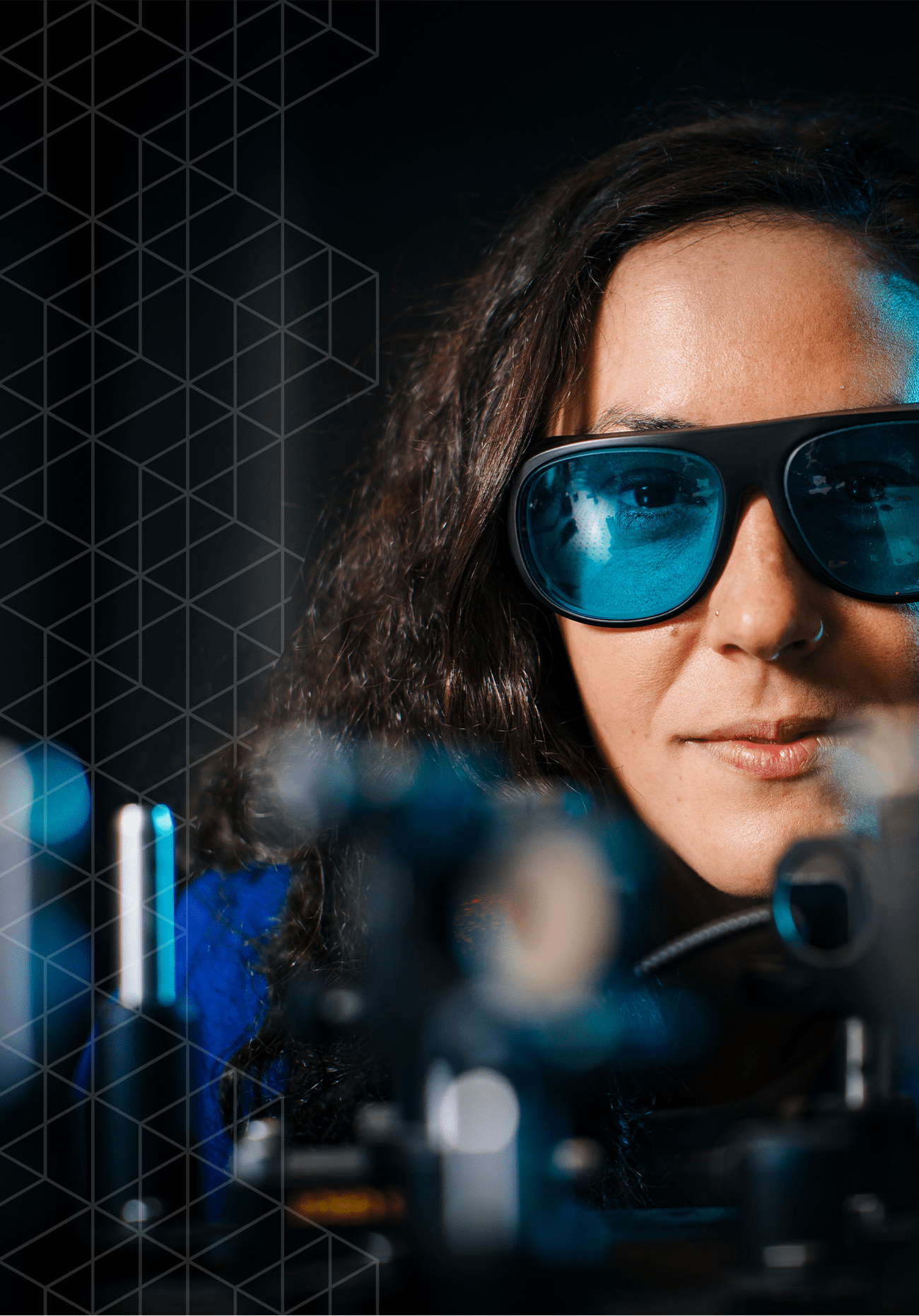

Join a vibrant community of students, faculty, and researchers committed to building a better future. With a focus on socially responsible innovation in areas such as health engineering, climate and sustainability, machine learning, artificial intelligence, games, and human computer interaction, there are endless possibilities to make a positive impact. We can’t wait to see what you will accomplish here.

Inclusion at Baskin Engineering

UC Santa Cruz and Baskin Engineering are committed to creating educational equity that will lead to real, transformative change. We are one of only two institutions in the nation that holds the honor of being a Hispanic Serving Institution, an Asian American Native American Pacific Islander Serving Institution, and member of the Association of American Universities.

Genomics for everyone

UCSC researchers release the first human ‘pangenome,’ better representing our genetic diversity and setting the stage for new discoveries.